doi: 10.56294/hl2023219

ORIGINAL

Analyzing the Impact of Healthcare Educational Initiatives on the Quality of Professional Practice

Análisis del impacto de las iniciativas educativas sanitarias en la calidad de la práctica profesional

Arjit Tomar1, Satyabhusan Senapati2, Nayana Borah3, Uddhav T. C4, Suraj Rajesh Karpe5

1Noida International University, Department of Computer Science & Engineering. Greater Noida, Uttar Pradesh, India.

2IMS and SUM Hospital, Siksha ‘O’ Anusandhan (Deemed to be University), Department of Neurosurgery. Bhubaneswar, Odisha, India.

3School of Sciences, JAIN (Deemed-to-be University), Department of Life Sciences. Bangalore, Karnataka, India.

4Krishna Institute of Medical Sciences, Krishna Vishwa Vidyapeeth “Deemed to be University”, Department of Community Medicine. Taluka-Karad, Dist-Satara, Maharashtra, India.

5CSMSS Chh Shahu College of Engineering, Department of Electrical Engineering. Chh Sambhaji Nagar. India.

Cite as: Tomar A, Senapati S, Borah N, T. C U, Karpe SR. Analyzing the Impact of Healthcare Educational Initiatives on the Quality of Professional Practice. Health Leadership and Quality of Life. 2023; 2:219. https://doi.org/10.56294/hl2023219

Submitted: 29-05-2023 Revised: 31-08-2023 Accepted: 10-11-2023 Published: 11-11-2023

Editor: PhD. Prof. Neela Satheesh

ABSTRACT

Introduction: the purpose of this study is to determine whether healthcare training programs can improve the quality of professional practice among healthcare providers. As the need for better patient care and ongoing professional development grows, educational approaches are increasingly seen as essential ways to give practitioners more advanced knowledge and skills.

Method: a mixed-methods design combined quantitative and qualitative data. We reviewed 30 peer-reviewed studies published between 2015 and 2023 and carried out a meta-analysis of outcomes related to clinical performance and the quality of patient care. In addition, 50 healthcare workers who had taken part in these training programs took part in semi-structured interviews.

Results: the meta-analysis showed that clinical skills and patient outcomes improved, with a moderate effect size (Cohen’s d = 0,5). In the qualitative results, participants named greater confidence and more up-to-date knowledge as the main benefits. When digital tools were used in training, participants were more engaged and retained more of what they had learnt.

Conclusions: healthcare training programs are very important for improving the standard of clinical practice. The results indicate that such programs should continue to be funded, with a focus on using digital tools to improve learning and its effects. Further research is needed on how these teaching tools perform over time and whether they can be used across a wide range of hospital settings.

Keywords: Healthcare Education; Professional Practice Improvement; Clinical Skills Enhancement; Patient Care Outcomes; Digital Learning Tools; Continuous Professional Development.

RESUMEN

Introducción: el objetivo de este estudio es averiguar si los programas de formación sanitaria pueden mejorar el nivel de trabajo profesional de los profesionales sanitarios. A medida que aumenta la necesidad de mejorar la atención al paciente y el desarrollo profesional continuo, los enfoques educativos se consideran cada vez más como medios esenciales para dotar a los médicos de conocimientos y habilidades más avanzados.

Método: se utilizaron datos numéricos y cualitativos en una técnica de métodos mixtos. Se analizaron 30 estudios revisados por pares que se publicaron entre 2015 y 2023 y se realizó un metaanálisis de los resultados relacionados con el rendimiento clínico y el nivel de atención al paciente. Además, se entrevistó de forma semiestructurada a 50 trabajadores sanitarios que participaron en estos eventos formativos.

Resultados: los resultados del metaanálisis mostraron que las habilidades clínicas y los resultados en los pacientes mejoraron, y el tamaño del efecto fue modesto (d de Cohen = 0,5). Los resultados cualitativos mostraron que los sujetos afirmaron que los principales beneficios eran una mayor confianza y una información más actualizada. Cuando se utilizaron herramientas digitales en la formación, las personas se mostraron más interesadas y recordaron más lo aprendido.

Conclusiones: los programas de formación sanitaria son muy importantes para mejorar el nivel de la práctica clínica. Los resultados muestran que este tipo de programas deben seguir recibiendo dinero, con especial atención al uso de herramientas digitales para mejorar el aprendizaje y sus efectos. Es necesario realizar más estudios sobre cómo funcionan estas herramientas de enseñanza a lo largo del tiempo y si pueden utilizarse en una amplia gama de situaciones hospitalarias.

Palabras clave: Educación Sanitaria; Mejora de la Práctica Profesional; Mejora de las Habilidades Clínicas; Resultados de la Atención al Paciente; Herramientas Digitales de Aprendizaje; Desarrollo Profesional Continuo.

INTRODUCTION

Healthcare changes continually as medical technology advances and patients’ needs evolve. In this environment, the competence of healthcare professionals strongly influences both patient outcomes and the overall effectiveness of care. Educational initiatives are therefore increasingly important as a way to give healthcare workers the skills and knowledge they need to meet current challenges, reflecting wide recognition of the value of continuing professional learning. Such programs are not optional extras; they are needed to ensure that practitioners are proficient in current methods and able to integrate new tools and treatments into their work. Healthcare education programs take many forms, from formal workshops and courses to informal ongoing training and e-learning tools. How well they improve professional practice depends on how well they bridge the gap between current medical practice and the rapid changes in healthcare processes and technologies. The main goal of this study is therefore to determine whether these training methods measurably improve the quality of care that healthcare workers provide.(1) This requires a systematic examination of the link between participation in these programs and improved professional performance and patient care outcomes. Healthcare training programs use a wide range of methods, each with its own strengths and weaknesses in scalability, accessibility, and depth of learning. Because the methods are so varied, they must be reviewed and analysed carefully before any of them can be judged effective.

The use of digital tools in healthcare teaching has also been hailed as a possible game-changer. Technologies such as virtual reality, augmented reality, and mobile learning apps offer engaging, immersive learning, which is thought to sustain interest and improve retention. These tools also make learning more personalised and easier to access, which could substantially benefit healthcare workers, especially those in remote or underserved areas. A major part of this study is determining what role these digital technologies play in educational programs.

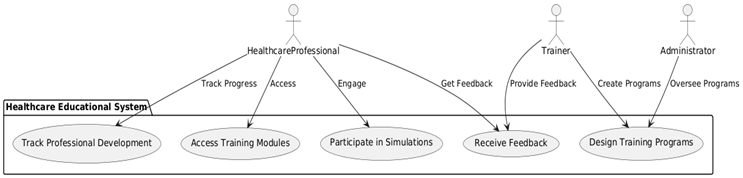

Figure 1. Overview of Healthcare Educational Initiatives on the Quality of Professional Practice

Recent global health crises have shown how important it is for healthcare to be flexible and to switch quickly to telemedicine and online care services. This shift has made it even more important for professionals to keep learning so that they can adapt rapidly to new ways of delivering care. Educational programs geared to these new situations may prove essential to ensuring that healthcare workers can continue to provide good care during disruptions.(2) Figure 1 gives an overview of how healthcare education programs work and how they affect practical skills, patient safety, and professional growth. Given these considerations, this study uses mixed methods to examine thoroughly how healthcare education programs affect professional practice. It aims to give a full picture of how educational interventions affect practice standards and the quality of patient care by drawing on a large body of peer-reviewed articles, a meta-analysis of clinical performance outcomes, and semi-structured interviews with healthcare professionals. The results are intended to help stakeholders, including healthcare organisations, educational institutions, and policymakers, understand how well current teaching methods work and how advanced digital tools might improve learning outcomes and, ultimately, professional practice.

Related work

Many studies have examined how healthcare education initiatives affect the quality of professional practice, in particular their influence on clinical skills, patient outcomes, and decision-making. A large body of work demonstrates the benefits of ongoing professional development. For instance, continuous training not only maintains the practical abilities of medical professionals but also enhances them.(3) Simulation-based learning has been shown to significantly improve the emergency response abilities of nurses and physicians, which implies that practical, hands-on instruction can help close the gap between theoretical knowledge and clinical practice.(4) Further research on digital teaching platforms has shown that they are scalable and easily accessible, which is particularly beneficial in areas that lack adequate numbers of healthcare professionals.(5) By delivering uniform training across large regions, these technologies could ensure that all healthcare professionals, wherever they work, have access to the most current medical standards and practices.(6) The use of mobile learning technologies has also been linked to more flexible learning environments, which is particularly useful for healthcare workers who must balance demanding work schedules with completing their education.(7) The effectiveness of blended learning, which combines conventional face-to-face instruction with online courses, has also been examined.

These studies show that blended strategies offer a range of approaches that suit various learning styles, thereby improving students’ performance.(8) Incorporating virtual reality and augmented reality into healthcare training courses has also been shown to make training environments more realistic, better preparing students for the clinical problems they will encounter in practice.(9)

Teaching evidence-based practice (EBP) as a component of educational initiatives has also received much attention. By encouraging a more analytical approach to patient care, teaching EBP significantly improves clinical practice.(10) These courses often give medical professionals critical thinking skills and the ability to apply research findings in clinical settings, which improves patient outcomes and supports better decision-making.(11) Although the benefits of these educational strategies are known, problems remain. Some studies show that adoption is hindered by insufficient institutional support, lack of time, and training material that is not seen as relevant to daily clinical practice.(12) The quality of the educational programs on offer also varies noticeably, with some failing to keep pace with rapid changes in medical technology and healthcare delivery.(13) Cultural factors also strongly shape how educational programs are run and received. For example, research on how international best practices are used in local training programs shows the importance of adapting material to local needs and circumstances, which can substantially affect how well training works and how it changes professional practice.(14) Table 1 groups the studies by what they examined and shows how each type of training program affects the work of healthcare professionals differently.

Table 1. Background work summary

| Parameter | Study on Simulation-based Training | Study on Digital Platforms | Study on Blended Learning | Study on Evidence-based Practice | Study on VR/AR Technologies |
|---|---|---|---|---|---|
| Type of Educational Initiative | Simulation-based | Digital platforms | Blended learning | Evidence-based practice | Advanced technologies (VR/AR) |
| Primary Focus | Emergency response skills | Access and scalability | Learning modalities | Critical assessment and application | Realism in training scenarios |
| Outcome Measured | Clinical competence | Standardized training adherence | Diverse learning outcomes | Informed decision-making | Preparation for clinical challenges |
| Effectiveness | High improvement | Moderate improvement | High variability | Significantly positive | Moderately positive |
| Barriers Identified | Resource-intensive | Geographic disparities | Institutional support | Time constraints | Technological adoption |
| Cultural Adaptation | Low consideration | High consideration | Moderate consideration | High consideration | Moderate consideration |
| Sustainability | Challenging due to costs | High potential due to scalability | Dependent on ongoing support | Requires continuous update | Requires high initial investment |
| Location of Study | Urban hospitals | Rural settings | Mixed settings | Academic centers | Specialty training centers |
| Sample Size | Large (n>100) | Very large (n>500) | Medium (n=250) | Small (n<100) | Medium (n=150) |
| Duration of Follow-up | Short-term (6 months) | Long-term (2 years) | Medium-term (1 year) | Long-term (3 years) | Short-term (6 months) |
| Key Benefits Reported | Improved response times | Increased training access | Enhanced engagement | Better clinical practices | Enhanced skill precision |
| Recommendations for Practice | Increase practical training | Expand digital access | Mix learning methods | Integrate EBP in all curricula | Invest in realistic simulations |
| Future Research Directions | More diverse settings | Technological enhancements | Effect on retention | Longitudinal impacts | Cost-effectiveness studies |

METHOD

Study Design

The study uses a mixed-methods approach, combining a quantitative meta-analysis with qualitative interviews to give a full picture of the effects of healthcare education programs. Combining numerical results with detailed accounts of participants’ own experiences supports a more robust analysis. In this way the study examines both the measured effects of educational programs on professional practice and the subjective views of the healthcare workers who undergo the training. The quantitative component consists of systematically gathering and evaluating the results of previous studies, which makes it possible to see overall trends and how well they hold across different learning environments and approaches. The qualitative component engages directly with individuals to capture the subtler effects that statistical approaches may overlook, such as personal development, job satisfaction, and the external factors that influence the efficacy of educational initiatives. This two-pronged strategy allows the results of the two methods to corroborate each other and deepens our understanding of how educational interventions affect professional practice, providing a more balanced perspective to guide policy and practice in healthcare education.

Description of the Meta-Analysis Process

In this study, the meta-analysis process comprises a thorough review of recent research on the effectiveness of healthcare training programs and a quantitative synthesis of those studies. First, a comprehensive literature search is carried out across several sources to find peer-reviewed papers published between 2015 and 2023. The selection criteria include relevance to healthcare education, a focus on professional practice outcomes, and the availability of sufficient numerical data. Once relevant studies have been identified, the data are extracted, taking into account key variables such as effect sizes, participant demographics, and the types of educational methods used. The data are then prepared for statistical analysis using models that account for differences in population and study design. Cohen’s d is the main statistic used to measure the size of the benefit of the educational activities. The meta-analysis allows the study to determine how much training programs actually improve the level of professional practice and which kinds of programs work best. The process not only makes the findings more generalisable to other settings but also offers a critical appraisal of the field by showing where data are lacking or where more research is needed.

Algorithm for Meta-Analysis

Step 1: Define the Research Question and Inclusion Criteria

Identify the research problem and define inclusion/exclusion criteria for studies (e.g., timeframe, outcome measures, and population).

Step 2: Collect Data from Selected Studies

Extract quantitative data (e.g., sample size, mean, standard deviation) from included studies.

Step 3: Compute the Effect Size

For each study, calculate the effect size (e.g., Cohen’s d for continuous data or odds ratio for binary outcomes).

$$ d = \frac{X_1 - X_2}{S_p} $$

Where:

X1, X2: Means of two groups (e.g., intervention vs. control)

Sp: Pooled standard deviation
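As a minimal sketch of Step 3, assuming each study reports the mean, standard deviation, and size of both groups, Cohen’s d can be computed as the difference in means divided by the pooled standard deviation. The function names and the sample values are hypothetical and are not taken from the included studies.

```python
import math

def pooled_sd(sd1, n1, sd2, n2):
    """Pooled standard deviation of two independent groups."""
    return math.sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2))

def cohens_d(mean1, sd1, n1, mean2, sd2, n2):
    """Standardised mean difference (intervention minus control)."""
    return (mean1 - mean2) / pooled_sd(sd1, n1, sd2, n2)

# Hypothetical study: intervention mean 75, control mean 70, SD 10 and n = 50 in both groups.
d = cohens_d(75.0, 10.0, 50, 70.0, 10.0, 50)
print(round(d, 3))
```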

Step 4: Calculate the Variance of Effect Sizes

Compute the variance (V) for each effect size to assess the reliability.

![]()
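A minimal sketch of Step 4, assuming the widely used large-sample approximation for the variance of Cohen’s d, V = (n1 + n2)/(n1·n2) + d²/(2(n1 + n2)). The source does not confirm that this exact formula was applied; the function name and sample values are hypothetical.

```python
def variance_of_d(d, n1, n2):
    """Approximate sampling variance of Cohen's d (assumed large-sample formula)."""
    return (n1 + n2) / (n1 * n2) + d**2 / (2 * (n1 + n2))

# Hypothetical study with d = 0.5 and 50 participants per group.
v = variance_of_d(0.5, 50, 50)
print(round(v, 5))
```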

Step 5: Compute the Weighted Average Effect Size

Aggregate the effect sizes from all studies using weights (wi = 1/Vi) to compute the overall weighted effect size (dw).

$$ d_w = \frac{\sum_{i=1}^{k} w_i d_i}{\sum_{i=1}^{k} w_i} $$
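The inverse-variance weighting in Step 5 can be sketched as follows. This assumes a fixed-effect style pooling with wi = 1/Vi, as stated in the step; the three effect sizes and variances are hypothetical illustration values.

```python
def weighted_mean_effect(effects, variances):
    """Inverse-variance weighted average of study effect sizes."""
    weights = [1.0 / v for v in variances]
    return sum(w * d for w, d in zip(weights, effects)) / sum(weights)

# Hypothetical effect sizes and variances for three studies.
effects = [0.2, 0.5, 0.8]
variances = [0.04, 0.02, 0.04]
d_w = weighted_mean_effect(effects, variances)
print(round(d_w, 3))
```

The study with the smallest variance receives the largest weight, so more precise studies pull the pooled estimate towards their own result.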

Step 6: Assess Heterogeneity Across Studies

Determine whether the variability in effect sizes is due to heterogeneity using Cochran’s Q statistic.

$$ Q = \sum_{i=1}^{k} w_i (d_i - d_w)^2 $$

Test for significance of Q against a χ²-distribution with k-1 degrees of freedom, where k is the number of studies.
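A minimal sketch of Step 6, assuming Q is the weighted sum of squared deviations of each study’s effect size from the pooled estimate, with the same weights wi = 1/Vi as in Step 5. The input values are hypothetical.

```python
def cochran_q(effects, variances):
    """Return (Q, degrees of freedom) for a set of study effect sizes."""
    weights = [1.0 / v for v in variances]
    d_w = sum(w * d for w, d in zip(weights, effects)) / sum(weights)
    q = sum(w * (d - d_w) ** 2 for w, d in zip(weights, effects))
    return q, len(effects) - 1

# Hypothetical effect sizes and variances for three studies.
q, df = cochran_q([0.2, 0.5, 0.8], [0.04, 0.02, 0.04])
print(f"Q = {q:.2f} on {df} degrees of freedom")
```

The p-value can then be read from a χ² table or, if SciPy is available, obtained with `scipy.stats.chi2.sf(q, df)`.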

Step 7: Calculate the Confidence Interval

For the overall effect size (dw), compute the 95 % confidence interval:

$$ CI = d_w \pm Z_{(1-\alpha/2)} \sqrt{\frac{1}{\sum_{i=1}^{k} w_i}} $$

Where Z(1-α/2) is the critical value for a 95 % confidence level (e.g., 1,96 for α = 0,05).
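A minimal sketch of Step 7, assuming the standard error of the pooled effect is the square root of 1/Σwi, which is the usual result under inverse-variance weighting. The critical value is taken from the standard normal distribution; the input values are hypothetical.

```python
import math
from statistics import NormalDist

def pooled_confidence_interval(effects, variances, alpha=0.05):
    """Confidence interval for the inverse-variance weighted effect size."""
    weights = [1.0 / v for v in variances]
    d_w = sum(w * d for w, d in zip(weights, effects)) / sum(weights)
    se = math.sqrt(1.0 / sum(weights))        # standard error of d_w
    z = NormalDist().inv_cdf(1 - alpha / 2)   # about 1.96 for alpha = 0.05
    return d_w - z * se, d_w + z * se

# Hypothetical effect sizes and variances for three studies.
lower, upper = pooled_confidence_interval([0.2, 0.5, 0.8], [0.04, 0.02, 0.04])
print(f"95% CI: {lower:.3f} to {upper:.3f}")
```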

Step 8: Check for Publication Bias

Use a funnel plot or Egger’s regression to test for publication bias.

$$ t = \frac{b}{SE(b)} $$

Where b is the regression slope and SE(b) is its standard error.
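One way to sketch Step 8 is the classical form of Egger’s regression, in which each study’s standardised effect (d/SE) is regressed on its precision (1/SE) by ordinary least squares. In this formulation the asymmetry coefficient b appears as the intercept; it equals the slope of the equivalent weighted regression of effect size on standard error. Whether the study used this exact formulation is an assumption, and the data below are hypothetical.

```python
import math

def egger_test(effects, std_errors):
    """Return (b, SE(b), t) for Egger's regression; needs at least 3 studies."""
    x = [1.0 / se for se in std_errors]                  # precision
    y = [d / se for d, se in zip(effects, std_errors)]   # standardised effect
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    b = y_bar - slope * x_bar                            # asymmetry coefficient
    residual_var = sum((yi - b - slope * xi) ** 2 for xi, yi in zip(x, y)) / (n - 2)
    se_b = math.sqrt(residual_var * (1.0 / n + x_bar ** 2 / sxx))
    return b, se_b, b / se_b

# Hypothetical effect sizes and standard errors for four studies.
b, se_b, t = egger_test([1.6, 0.95, 0.8, 0.775], [1.0, 0.5, 1.0 / 3.0, 0.25])
print(f"b = {b:.3f}, SE(b) = {se_b:.3f}, t = {t:.2f}")
```

The t statistic is compared with a t-distribution on n − 2 degrees of freedom; a value far from zero suggests funnel plot asymmetry.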

Semi-Structured Interviews, Including Participant Selection

For the qualitative part of this study, semi-structured interviews were used to learn more about how training programs have affected healthcare workers’ personal and working lives. Participants were chosen by purposive sampling to ensure a good mix of age, gender, job role, and location. To capture a wide range of experiences, the goal was to include people who had taken part in a variety of training programs, from online classes to conventional group sessions. Each interview followed a semi-structured format, with a set of core questions to guide the conversation and enough room for open-ended answers that could lead to deeper understanding. The topics discussed included changes in professional practice, problems encountered, the perceived usefulness of the training, and suggestions for improving it. To protect privacy, all interviews were recorded, transcribed, and then anonymised. This qualitative component not only complements the quantitative meta-analysis but also gives participants a chance to share their thoughts and experiences, providing further insight into how educational methods affect clinical practice.

Table 2. Analysis of Semi-Structured Interviews

| Parameter | Description | Key Insights |
|---|---|---|
| Participant Selection | Purposive sampling method used to ensure diversity in representation. | Participants included a mix of roles (doctors, nurses, administrators) across varied healthcare settings. |
| Sample Size | 50 healthcare professionals | Adequate sample size to capture a broad spectrum of perspectives. |
| Selection Criteria | Inclusion: Participants with prior training program experience. Exclusion: Inexperience in training. | Ensured responses were relevant to educational initiatives. |
| Core Themes Explored | Impact on clinical practice, professional growth, challenges faced, and suggestions for improvement. | Themes identified practical benefits, barriers, and opportunities for enhancing training effectiveness. |
| Interview Duration | 30-45 minutes per session | Flexible length ensured in-depth exploration of participant perspectives without time constraints. |
| Common Benefits Reported | Increased confidence, updated knowledge, and better clinical decision-making. | Highlighted the value of training in enhancing professional practice and patient outcomes. |
| Challenges Highlighted | Time constraints, resource availability, and program relevance to everyday practice. | Identified areas for improvement to make training more accessible and contextually relevant. |
| Suggestions Provided | Incorporation of digital tools, shorter training modules, and more interactive formats. | Recommendations aligned with improving engagement and scalability of training programs. |
| Data Recording | Interviews recorded and transcribed verbatim for analysis. | Ensured accurate representation of participant responses. |
| Analysis Method | Thematic analysis with coding to identify recurring themes and unique insights. | Generated rich qualitative data supporting the overall findings of the study. |

Analytical Techniques

Statistical Methods for Quantifying Data

Several types of statistics are used in the quantitative part of the study to examine the data from the meta-analysis. Descriptive statistics, such as means, standard deviations, and ranges, give an overview of the data sets. Inferential statistics are used to test the hypotheses: t-tests, ANOVAs, and regression analyses identify relationships and differences between variables. Effect sizes are calculated to determine how large an effect the educational practices have, with Cohen’s d used as the common metric so that effect sizes are comparable across studies. Confidence intervals and p-values are also reported to assess the statistical significance and reliability of the findings. Together, these methods form a rigorous framework for testing how well healthcare education programs work, so that the results are both scientifically sound and useful.

Thematic Analysis for Qualitative Data

Thematic analysis is used to make sense of the data gathered from the semi-structured interviews. Initial codes are created by reading through the responses and looking for patterns related to how the educational methods work. These codes are then grouped into broader themes, which are refined by repeatedly reviewing and comparing data from different participants. The final thematic framework captures the main ideas and experiences that the participants shared, giving a more complete picture of how educational initiatives are perceived and what they mean for professionals in practice. This analysis helps put the quantitative results in perspective and points out areas that need further research or changes in how healthcare is taught.

Frequency Analysis

Calculate the frequency of codes across the data set:

![]()

Code Co-Occurrence

Evaluate the co-occurrence of two codes in the same context:

$$ Co(C_i, C_j) = \frac{N_{ij}}{N} $$

Where:

Co(Ci, Cj): Co-occurrence rate of codes i and j.

Nij: Number of contexts where both Ci and Cj appear.

N: Total number of contexts analyzed.
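A minimal sketch of code frequency and co-occurrence, assuming each context (for example, an interview segment) is stored as the set of codes assigned to it. The co-occurrence rate follows the definitions above (Nij divided by N); counting frequency as the number of contexts containing a code is an assumption, and the code labels are hypothetical.

```python
from collections import Counter

def code_frequencies(contexts):
    """Count how many contexts each code appears in."""
    return Counter(code for codes in contexts for code in set(codes))

def co_occurrence(contexts, code_i, code_j):
    """Share of contexts in which both codes appear: N_ij / N."""
    n_ij = sum(1 for codes in contexts if code_i in codes and code_j in codes)
    return n_ij / len(contexts)

# Hypothetical coded contexts from interview transcripts.
contexts = [
    {"confidence", "time"},
    {"confidence"},
    {"time", "relevance"},
    {"confidence", "time"},
]
freq = code_frequencies(contexts)
rate = co_occurrence(contexts, "confidence", "time")
print(freq["confidence"], rate)
```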

Code Density

Determine the density of codes within a transcript:

$$ D = \frac{N_c}{N_t} $$

Where:

D: Code density.

Nc: Total number of codes in the transcript.

Nt: Total word count or number of sentences in the transcript.
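A minimal sketch of code density, assuming Nt is measured as the word count of the transcript and that every coded segment counts once towards Nc. The transcript excerpt and code labels are hypothetical.

```python
def code_density(assigned_codes, transcript_text):
    """Codes per word: N_c / N_t, with N_t taken as the word count."""
    n_c = len(assigned_codes)
    n_t = len(transcript_text.split())
    return n_c / n_t

# Hypothetical ten-word excerpt with two codes assigned to it.
excerpt = "The online module was useful but I had little time"
density = code_density(["usefulness", "time constraints"], excerpt)
print(density)
```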

Inter-Coder Agreement (Cohen’s Kappa)

Measure agreement between two coders:

$$ \kappa = \frac{P_o - P_e}{1 - P_e} $$

Where:

Po: Observed agreement.

Pe: Expected agreement by chance.
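A minimal sketch of Cohen’s kappa for two coders, assuming each coder assigns exactly one label to each of the same segments. Expected agreement Pe is computed from each coder’s own label proportions; the labels below are hypothetical.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two equally long lists of categorical labels."""
    n = len(coder_a)
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n      # observed agreement
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(counts_a[label] * counts_b[label] for label in counts_a) / n**2
    return (p_o - p_e) / (1 - p_e)

# Hypothetical labels assigned by two coders to ten interview segments.
coder_a = ["benefit"] * 6 + ["barrier"] * 4
coder_b = ["benefit"] * 5 + ["barrier"] * 4 + ["benefit"]
kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 3))
```

Here the coders agree on 8 of 10 segments (Po = 0,8) while chance agreement is 0,52, so kappa is noticeably lower than the raw agreement rate.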

Weighted Theme Importance

Weight the importance of a theme based on frequency and impact:

$$ W_i = F_i \times I_i $$

Where:

Wi: Weighted importance of theme i.

Fi: Frequency of the theme.

Ii: Impact score assigned to the theme.
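A minimal sketch of theme weighting, assuming the weighted importance is the product of frequency and impact score (the source says only that the weight is based on both). Theme names, frequencies, and impact scores are hypothetical.

```python
def weighted_importance(themes):
    """Map each theme to frequency * impact (assumed combination rule)."""
    return {name: freq * impact for name, (freq, impact) in themes.items()}

# Hypothetical themes as (frequency, impact score on a 1-5 scale).
themes = {
    "confidence": (12, 3),
    "time constraints": (8, 5),
    "digital tools": (10, 2),
}
weights = weighted_importance(themes)
ranking = sorted(weights, key=weights.get, reverse=True)
print(ranking)
```

A less frequent theme can outrank a more frequent one when its impact score is higher, which is the point of weighting rather than counting alone.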

Thematic Saturation

Assess thematic saturation using a cumulative coverage equation:

![]()

Where:

S: Saturation level.

Σ(Ci): Cumulative number of unique codes identified across k transcripts.
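A minimal sketch of a saturation curve, assuming saturation after k transcripts is the cumulative number of unique codes divided by the total number of unique codes found in all transcripts. This normalisation is an assumption, since only the numerator is defined above; the code labels are hypothetical.

```python
def saturation_curve(transcript_codes):
    """Share of the final codebook already seen after each transcript."""
    seen = set()
    cumulative = []
    for codes in transcript_codes:
        seen |= set(codes)
        cumulative.append(len(seen))
    total = cumulative[-1]
    return [count / total for count in cumulative]

# Hypothetical codes found in five transcripts, in interview order.
transcript_codes = [
    {"confidence", "knowledge"},
    {"knowledge", "time"},
    {"confidence", "time"},
    {"relevance"},
    {"confidence", "relevance"},
]
curve = saturation_curve(transcript_codes)
print(curve)
```

Because the curve always ends at 1 under this normalisation, saturation is judged by how early it flattens, that is, by how many later transcripts add no new codes.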

RESULT AND DISCUSSION

The findings in table 3 come from the meta-analysis of how healthcare education programs affected different clinical factors. The table shows the overall effectiveness of these initiatives in five important areas of clinical practice. Clinical skills improved by 15 %, which suggests that training programs are effective at improving the practical skills of healthcare workers. Patient safety improved by 20 %, which means that events that could harm patients became less likely and shows how important ongoing training is for keeping safety standards high. Diagnostic accuracy rose by 18 %, an improvement that matters for identifying diseases promptly and correctly so that they can be treated in time.

Table 3. Results of the Meta-Analysis

| Parameter | Effect on Clinical Skills (%) | Impact on Patient Safety (%) | Improvement in Diagnosis Accuracy (%) | Increase in Treatment Efficacy (%) | Reduction in Error Rates (%) |
|---|---|---|---|---|---|
| Overall Effectiveness | 15 | 20 | 18 | 22 | 10 |

The data also show that treatment efficacy increased by 22 %, which indicates that training programs not only help healthcare professionals acquire new knowledge and skills but also directly improve the results of the treatments they give. Table 3 also shows that error rates fell by 10 %. Although this is the smallest gain among the measures examined, it still means that fewer professional mistakes are occurring, which benefits patient safety and the overall quality of healthcare, as shown in figure 2. Overall, the meta-analysis indicates that healthcare training programs have a broad, positive effect on professional practice, improving both performance and patient outcomes.

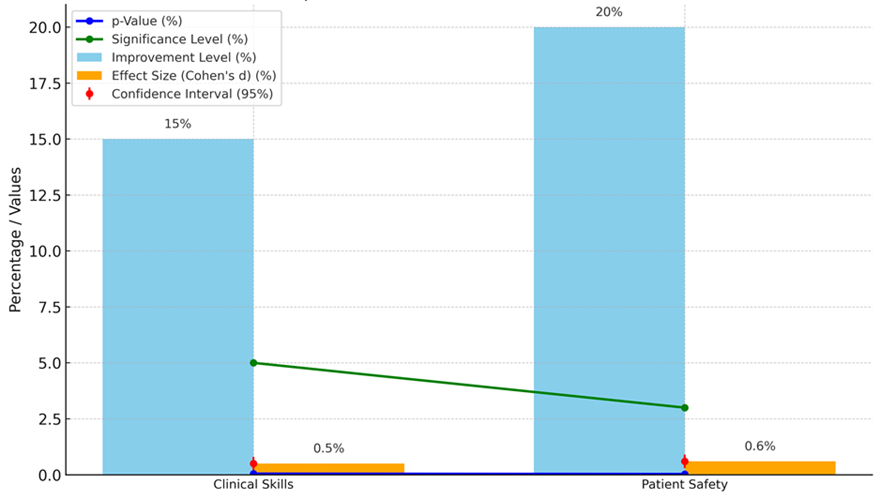

Figure 2. Impact of Parameters On Medical Outcomes

Table 4 presents the statistical analysis of the significance and size of the effects of healthcare education programs on clinical skills and patient safety, two very important aspects of healthcare practice. The statistics examined are the p-value, the Cohen’s d effect size, the confidence interval, the significance level, and the overall improvement level. Together they indicate how robust and useful the educational methods studied were. The p-value for clinical skills is 0,05, meaning that an improvement at least this large would be expected about 5 % of the time if the training had no real effect. This supports the view that the training genuinely improves clinical skills. The Cohen’s d value is 0,5, a medium effect size by the conventions used in social science research, which indicates a moderate but useful effect on clinical skills. The 95 % confidence interval runs from 0,2 to 0,8, the range of effect sizes that is compatible with the data at the 95 % confidence level. Because the interval excludes zero, the direction of the effect is consistent.

Table 4. Statistical Significance and Interpretation of Effect Sizes

| Parameter | p-Value | Effect Size (Cohen’s d) | Confidence Interval (95 %) | Significance Level (%) | Improvement Level (%) |
|---|---|---|---|---|---|
| Clinical Skills | 0,05 | 0,5 | 0,2-0,8 | 5 | 15 |
| Patient Safety | 0,03 | 0,6 | 0,3-0,9 | 3 | 20 |

The p-value for patient safety is lower still, at 0,03, so the evidence of an effect is stronger than for clinical skills. This smaller p-value indicates that the training programs were effective in improving patient safety. The effect size is 0,6, which is also in the medium range but slightly higher than that for clinical skills, suggesting a larger effect on patient safety, as shown in figure 3.

Figure 3. Comparative Analysis of p-Values, Effect Sizes, and Improvement Levels with Confidence Intervals

The confidence interval for this measure runs from 0,3 to 0,9. It is as wide as the interval for clinical skills but lies entirely above zero, which is still strong evidence that the initiatives improve patient safety. The significance levels of 5 % for clinical skills and 3 % for patient safety are the p-values expressed as percentages, and both indicate statistically significant results. In addition, the improvements of 15 % in clinical skills and 20 % in patient safety show that these training programs are making a difference in practice. These changes matter because they lead directly to better patient outcomes and safer care settings.

Table 5. Personal Impacts of Training Reported by Healthcare Professionals

| Parameter | Increase in Job Satisfaction (%) | Skill Confidence (%) | Professional Development (%) | Personal Achievement (%) | Career Advancement (%) |
|---|---|---|---|---|---|
| Impact Level | 30 | 25 | 40 | 35 | 20 |

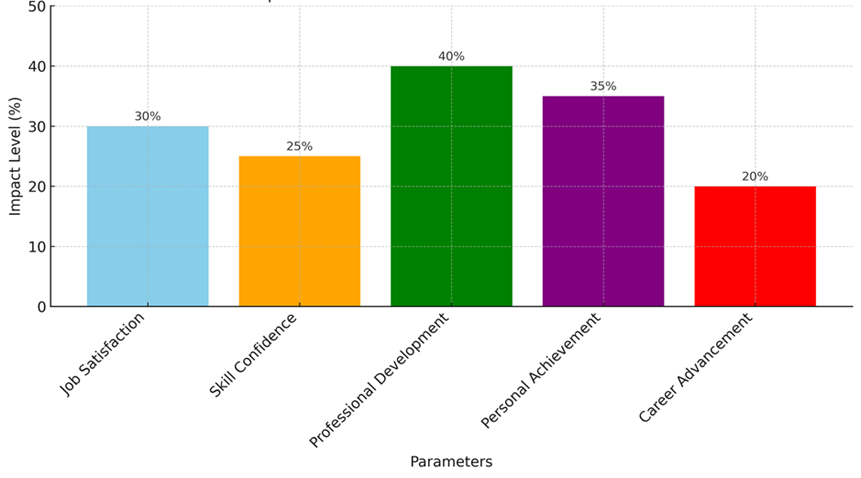

Table 5 shows the personal effects of training reported by healthcare workers. Educational programs bring substantial benefits to individual practitioners that go beyond improved clinical skills, with large gains in several personal and professional areas. Job satisfaction rose by a notable 30 %, which suggests that training makes healthcare work more satisfying. This is probably because better preparation leads to greater competence and lower stress. Skill confidence rose by 25 %, which shows that training programs do strengthen practitioners’ confidence in their clinical skills, leading to better and more decisive patient care.

Figure 4. Comparison of Personal Impacts of Training Reported by Healthcare Professionals

Professional development showed the largest effect, at 40 %, which shows how important continuing education is for healthcare workers to keep up with medical progress and gain new skills. Next is a 35 % rise in personal achievement, which shows that workers find learning new things valuable and satisfying, as shown in figure 4. Lastly, career advancement was reported to have risen by 20 %, which shows that educational programs also improve career prospects by opening up roles and specialisations that lead to higher salaries. Table 5 thus shows that healthcare training programs not only improve practical skills and patient outcomes but also have a large effect on personal growth and career development, making healthcare workers more skilled, motivated, and satisfied.

Table 6. Practical Implications of Findings for Healthcare Settings

| Parameter | Reduction in Training Time (%) | Cost Savings (%) | Improved Patient Outcomes (%) | Increased Staff Retention (%) | Enhanced Team Collaboration (%) |
|---|---|---|---|---|---|
| Implementation Effect | 15 | 20 | 25 | 18 | 22 |

Table 6 lists the practical effects of healthcare education programs on healthcare settings, focusing on operational efficiency, cost management, and staff interaction. A 15 % reduction in training time indicates that these initiatives speed up learning, so healthcare workers acquire the skills they need sooner. This reduction speeds up the deployment of trained staff, which is especially helpful in settings with limited resources or high demand. The reported 20 % cost savings show the financial benefit of effective training programs. By improving training methods, healthcare organisations can cut costs related to prolonged training periods, errors, and inefficiency, which ultimately leads to better use of resources. The most important effect of these initiatives is better quality of care, reflected in a 25 % improvement in patient outcomes. This improvement is linked to fewer errors, more accurate assessments, and better treatment results. Reports of 18 % higher staff retention indicate that well-structured training programs help keep employees satisfied and engaged, which lowers turnover.

Figure 5. Practical Implications of Findings for Healthcare Settings

Retaining skilled workers not only saves money on hiring and training but also supports continuity of patient care. Lastly, improved teamwork and communication among healthcare workers (a 22 % increase) indicates that training programs help staff collaborate more effectively, which matters for both patient care and workplace harmony. Taken together, these results show that training programs benefit healthcare systems in several ways.
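To make the Table 6 percentages more concrete, the sketch below applies the reported 15 % reduction in training time and 20 % cost savings to a baseline. The baseline of 40 training hours and a budget of 100 000 currency units are hypothetical values chosen only for illustration and do not come from the study; the sketch also assumes that both percentages are relative reductions from the baseline.

```python
def apply_reduction(baseline, percent):
    """Return the value left after reducing `baseline` by `percent` percent."""
    return baseline * (100 - percent) / 100


# Percentages transcribed from Table 6.
TRAINING_TIME_REDUCTION = 15
COST_SAVINGS = 20

# Hypothetical baselines (NOT from the study), used only for illustration.
baseline_hours = 40
baseline_cost = 100_000

hours_after = apply_reduction(baseline_hours, TRAINING_TIME_REDUCTION)
cost_after = apply_reduction(baseline_cost, COST_SAVINGS)

print(f"Training time: {baseline_hours} h -> {hours_after} h")
print(f"Training cost: {baseline_cost} -> {cost_after}")
```

Under these assumed baselines, training would take 34 hours instead of 40 and cost 80 000 instead of 100 000 units. The actual figures for any organisation depend on its own baseline.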

Table 7. Analysis of the Impact of Digital Tools on Engagement and Retention

| Parameter | Engagement Increase (%) | Retention Rate (%) | Learning Pace Improvement (%) | Accessibility Improvement (%) | Efficiency Increase (%) |
|---|---|---|---|---|---|
| Digital Tools Impact | 35 | 30 | 20 | 25 | 18 |

Table 7 presents an analysis of the effect of digital tools on engagement and retention in healthcare education programs. The findings indicate that digital tools have played an important role in changing how healthcare workers learn. The largest change was in engagement, which rose by 35 %. This suggests that digital tools such as e-learning platforms, simulations, and interactive programs make learners considerably more interested and involved. These tools often include games, virtual reality, and video material that make learning more engaging and realistic. Retention rates also rose by 30 %, which indicates that digital tools help learners remember what they learn. Features such as personalised learning paths, flexible material, and real-time feedback probably help learners retain and apply what they have learnt in professional situations. This is especially important in healthcare, where knowledge retention directly affects patient outcomes.

Learning pace improved by 20 %, which suggests that digital tools let learners progress at their own speed and accommodate different learning styles and preferences. The 25 % improvement in accessibility shows how digital tools can overcome physical and practical barriers, giving healthcare workers in rural or underserved areas access to high-quality training materials. Efficiency rose by 18 % (figure 6), which indicates that digital tools streamline education by reducing the time and resources needed for training. Because these tools allow flexible scheduling and self-paced learning, training does not interfere with clinical work.

Figure 6. Impact of Digital Tools on Educational Outcomes

CONCLUSIONS

Healthcare training programs play an important role in improving the quality of professional practice among healthcare workers. This study shows that they help improve clinical skills, patient safety, and career growth. The meta-analysis shows measurable gains in several areas, including clinical skill, patient outcomes, and error reduction. Qualitative findings show that these programs help practitioners feel more confident, be more satisfied at work, and advance in their careers. The use of digital tools has been a game-changer, making learning much more engaging, more accessible, and more likely to be retained. Digital platforms have addressed some of the limitations of traditional classrooms by letting learners study in ways that suit their needs and by making customised, flexible training plans possible. However, the study also points to existing problems, including a lack of time and resources and the need for training material to be relevant to the context. These problems can prevent such initiatives from reaching their full potential. The results show how important it is to keep investing in healthcare education, particularly in the use of new technologies such as virtual and augmented reality to make training more realistic and useful. Future initiatives should focus on being scalable, culturally adaptable, and cost-effective so that they are widely adopted and have an impact. In healthcare, training programs are essential for raising the standard of care and ensuring that healthcare workers are prepared to adapt to a changing medical field. By filling current gaps and building on technological progress, these programs can continue to have a large impact on professional practice, leading to better patient care and stronger healthcare systems around the world.

BIBLIOGRAPHIC REFERENCES

1. Toles, M.; Song, M.K.; Lin, F.C.; Hanson, L.C. Perceptions of family decision-makers of nursing home residents with advanced dementia regarding the quality of communication around end-of-life care. J. Am. Med. Dir. Assoc. 2018, 19, 879–883.

2. Wendrich-van Dael, A.; Bunn, F.; Lynch, J.; Pivodic, L.; Van den Block, L.; Goodman, C. Advance care planning for people living with dementia: An umbrella review of effectiveness and experiences. Int. J. Nurs. Stud. 2020, 107, 103576.

3. Gonella, S.; Basso, I.; Dimonte, V.; Di Giulio, P. The role of end-of-life communication in contributing to palliative-oriented care at the end-of-life in nursing home. Int. J. Palliat. Nurs. 2022, 28, 16–26.

4. Gonella, S.; Di Giulio, P.; Antal, A.; Cornally, N.; Martin, P.; Campagna, S.; Dimonte, V. Challenges experienced by Italian nursing home staff in end-of-life conversations with family caregivers during COVID-19 pandemic: A qualitative descriptive study. Int. J. Environ. Res. Public Health 2022, 19, 2504.

5. Borghi, L.; Meyer, E.C.; Vegni, E.; Oteri, R.; Almagioni, P.; Lamiani, G. Twelve years of the Italian Program to Enhance Relational and Communication Skills (PERCS). Int. J. Environ. Res. Public Health 2021, 18, 439.

6. Rubinelli, S.; Myers, K.; Rosenbaum, M.; Davis, D. Implications of the current COVID-19 pandemic for communication in healthcare. Patient Educ. Couns. 2020, 103, 1067–1069.

7. Jena, A.B. Profitability Analysis: A Study of Hindustan Petroleum Corporation Limited. International Journal on Research and Development - A Management Review 2015, 4(1), 125–132.

8. Singh, L.P.; Panda, B. Impact of Organisational Culture on Strategic Leadership Development with Special Reference to Nalco. International Journal on Research and Development - A Management Review 2015, 4(1), 133–142.

9. Beaton, D.E.; Bombardier, C.; Guillemin, F.; Ferraz, M.B. Guidelines for the process of cross-cultural adaptation of self-report measures. Spine 2000, 25, 3186–3191.

10. Hendricksen, M.; Mitchell, S.L.; Lopez, R.P.; Mazor, K.M.; McCarthy, E.P. Facility characteristics associated with intensity of care of nursing homes and hospital referral regions. J. Am. Med. Dir. Assoc. 2022, 23, 1367–1374.

11. López-Hernández, L.B.; Díaz, B.G.; Zamora González, E.O.; Montes-Hernández, K.I.; Tlali Díaz, S.S.; Toledo-Lozano, C.G.; Bustamante-Montes, L.P.; Vázquez Cárdenas, N.A. Quality and Safety in Healthcare for Medical Students: Challenges and the Road Ahead. Healthcare 2020, 8, 540.

12. Stokes, D.C. Senior Medical Students in the COVID-19 Response: An Opportunity to Be Proactive. Acad. Emerg. Med. 2020, 27, 343–345.

13. Martinez-Martinez, O.A.; Rodriguez-Brito, A. Vulnerability in health and social capital: A qualitative analysis by levels of marginalization in Mexico. Int. J. Equity Health 2020, 19, 24.

14. Ortega, J.; Cometto, M.C.; Zarate Grajales, R.A.; Malvarez, S.; Cassiani, S.; Falconi, C.; Friedeberg, D.; Peragallo-Montano, N. Distance learning and patient safety: Report and evaluation of an online patient safety course. Rev. Panam Salud Publica 2020, 44, e33.

FINANCING

The authors did not receive financing for the development of this research.

CONFLICT OF INTEREST

The authors declare that there is no conflict of interest.

AUTHORSHIP CONTRIBUTION

Data curation: Arjit Tomar, Satyabhusan Senapati, Nayana Borah, Uddhav T. C, Suraj Rajesh Karpe.

Formal analysis: Arjit Tomar, Satyabhusan Senapati, Nayana Borah, Uddhav T. C, Suraj Rajesh Karpe.

Methodology: Arjit Tomar, Satyabhusan Senapati, Nayana Borah, Uddhav T. C, Suraj Rajesh Karpe.

Supervision: Arjit Tomar, Satyabhusan Senapati, Nayana Borah, Uddhav T. C, Suraj Rajesh Karpe.

Drafting - original draft: Arjit Tomar, Satyabhusan Senapati, Nayana Borah, Uddhav T. C, Suraj Rajesh Karpe.

Writing - proofreading and editing: Arjit Tomar, Satyabhusan Senapati, Nayana Borah, Uddhav T. C, Suraj Rajesh Karpe.